Systems of linear equations: Solving systems of linear equations in Python

Solving systems via linsolve and solve

Solving systems via linsolve and solve

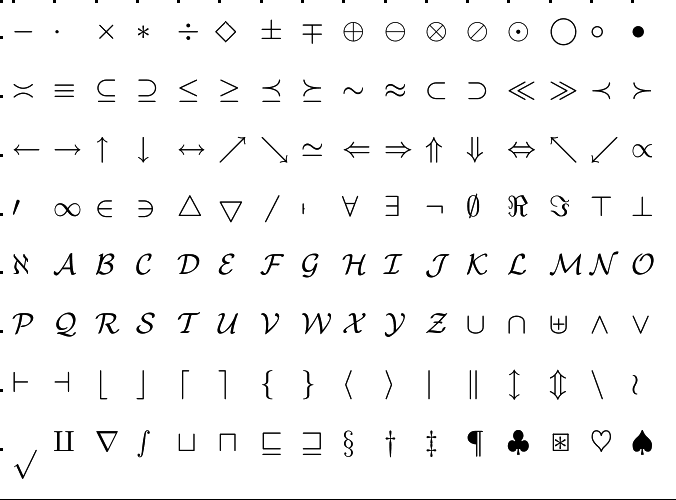

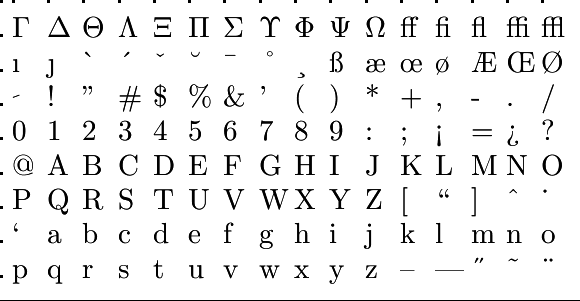

Python, and in particular, the Symbolic Python package abbreviated with SymPy and to import as sympy, has sufficient built-in facilities to resolve system of linear equations numerically or algebraically. We illustrate them using the system \[ \left\{\begin{array}{rrrrrl} x & + & 2y & + & 3z & =\;2\\ & & y & + & 2z & =\;1\\ 3x & + & y & + & z & =\;3 \end{array}\right. \] one solution, namely \[ \cv{x\\y\\z}=

\left(\!\!\begin{array}{r} 1\\-1\\1\end{array}\right)\]

We SymPy will not fully cover here, but you can find computer algebra with companies, that is, not only calculate with numbers, but also symbols. By itself the packages and frolic on the official website www.sympy.org the documentation to consult you come a long way.

We begin SymPy package import with an abbreviated name and the system compared to key in a Python session. In sympy write an equation or not to = == but the expression Eq(x,y) for \(x=y\). Is convenient to write the equation as \(x-y = 0\) and then just xy use. You should also (like in MATLAB) explicitly specify which symbols you want to use. The system of linear equations at a suitable matrix form to be determined with the command linear_eq_to_matrix . Note that the package sympy its own data structure for matrices. For large systems, it is better to numpy package to use, but you have to know linear algebra theory to know what you are doing and be able to work smoothly.

>>> import sympy as sy

>>> x, y, z = sy.symbols('xy z')

>>> var = x,y,z

>>> sys = [x + 2*y + 3*z - 2, y + 2*z - 1, 3*x + y + z - 3]

>>> A, b = sy.linear_eq_to_matrix(sys, var)

>>> A

Matrix([

[1, 2, 3],

[0, 1, 2],

[3, 1, 1]])

>>> b

Matrix([

[2],

[1],

[3]])We can now linsolve use the vector \(\vec{v}\) be found so that \(A\vec{v}=\vec{b}\)

>>> opl = sy.linsolve((A,b), var); opl

{(1, -1, 1)}The solution of the original system stands for \(\vec{v}=\cv{x \\ y \\ z}\) and we would have also found this solution with solve without going first over to the matrix form.

>>> sol = sy.solve(sys,var); opl

{x: 1, y: -1, z: 1}

>>> opl[x], opl[y], opl[z]

(1, -1, 1)Advantage of the matrix form is that you can better control the theory for solving equations. For example, you can apply Gaussian elimination to solve the system. The name of the function rref is an acronym for reduced row echelon form. An alternative that also involves Gaussian elimination is the instruction solve_linear_system.

>>> Ab = A.row_join(b); Ab # the augmented matrix

Matrix([

[1, 2, 3, 2],

[0, 1, 2, 1],

[3, 1, 1, 3]]) >>> sy.solve_linear_system(Ab, x, y, z)

{x: 1, y: -1, z: 1}

>>> GT = Ab.rref(); GT[0] # computation of the reduced echelon form

Matrix([

[1, 0, 0, 1],

[0, 1, 0, -1],

[0, 0, 1, 1]])

>>> GT[0][:, 3] % selection of the fourth column

Matrix([

[ 1],

[-1],

[ 1]])

\(\phantom{x}\)

As mentioned, you can better use the NumPy package for numerical computations, but then you have to organize everything in matrix calculations. The example might look as follows. Note that the NumPy package, just like its big brother scipy (an acronym for Scientific Python) has no built-in commands for calculating the reduced echelon form and therefore we switch between this package and SymPy to do the job.

>>> import numpy as np

>>> A = np.array([[1, 2, 3], [0,1,2], [3, 1, 1]]); print(A)

[[1 2 3]

[0 1 2]

[3 1 1]]

>>> b = np.array([[2],[1],[3]]); print(b)

[[2]

[1]

[3]]

>>> opl = np.linalg.solve(A,b); print(opl)

[[ 1.]

[-1.]

[ 1.]]

>>> Ab = np.hstack((A,b)) # gerande matrix

>>> print(Ab)

[[1 2 3 2]

[0 1 2 1]

[3 1 1 3]]

>>> import sympy as sy

>>> Abmat = sy.Matrix(Ab)

>>> GT = np.array(Abmat.rref()[0]); print(GT) # reduced echelon form via sympy

[[1 0 0 1]

[0 1 0 -1]

[0 0 1 1]]